This is pretty slick, but doesn’t this just mean the bots hammer your server looping forever? How much processing do you do of those forms for example?

Daniel Quinn

Canadian software engineer living in Europe.

- 1 Post

- 64 Comments

10·8 days ago

Depending on how complicated you’re willing to allow it to be to run locally, you could just run a webserver right on the desktop. Bind it to `localhost:8000` so there’s no risk of someone exploiting it via the network, and then your startup script is just:

- Start webserver

- Open browser to http://localhost:8000/

It’s not smooth, or professional-looking, but it’s easy ;-)
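A minimal sketch of that two-step startup script, using only the Python standard library. The static-file handler is a stand-in (an assumption, since the actual application isn't shown), and `make_server` and `main` are hypothetical names:

```python
import http.server
import threading
import webbrowser

HOST = "127.0.0.1"  # loopback only, so nothing is reachable from the network
PORT = 8000


def make_server(host=HOST, port=PORT):
    """Create (but do not start) an HTTP server bound to the loopback interface."""
    # SimpleHTTPRequestHandler serves files from the current directory;
    # swap in your own handler or WSGI app here.
    handler = http.server.SimpleHTTPRequestHandler
    return http.server.ThreadingHTTPServer((host, port), handler)


def main():
    server = make_server()
    port = server.server_address[1]
    # Step 2: open the browser shortly after the server starts serving.
    threading.Timer(0.5, webbrowser.open, args=(f"http://localhost:{port}/",)).start()
    try:
        # Step 1: serve until interrupted with Ctrl+C.
        server.serve_forever()
    except KeyboardInterrupt:
        pass
    finally:
        server.server_close()
```

Calling `main()` from a launcher script does both steps. Binding to `127.0.0.1` rather than `0.0.0.0` is what keeps it off the network.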

If you want something a little more slick, I would probably lean more toward “Path 2” as you call it. The webserver isn’t really necessary after all, since you’re not even using a network.

One option that you might not have considered however could be to rewrite the whole thing in JavaScript and port it to a static web page. Hosting costs on something like that approach £0, but you have to write JavaScript :-(

You might be interested in this project where someone has hooked up a low-power system to Mastodon and is tooting stories about the experience through it. The project author may also be worth contacting.

What exactly are you self-hosting that’s gobbling up that much data? I’ve been self-hosting my website for decades and haven’t used that much over all that time let alone in one month.

Most of my bandwidth consumption is from torrents and downloading Steam games, but even that doesn’t get me to even 1TB/month.

3·27 days ago

You can’t really make them go idle, save by restarting them with a do-nothing command like `tail -f /dev/null`. What you probably want to do is scale a service down to 0. This leaves the declaration that you want to have an image deployed as a container, “but for right now, don’t stand any containers up”.

If you’re running a Kubernetes cluster, then this is pretty straightforward: just edit the deployment config for the service in question to set `replicas: 0`. If you’re using Docker Compose, I believe the value to set is also called `replicas` and the default is `1`.

As for a limit to the number of running containers, I don’t think it exists unless you’re running an orchestrator like AWS EKS that sets an artificial limit of… 15 per node? I think? Generally you’re limited only by the resources available, which means it’s a good idea to make sure that you’re setting limits on the amount of RAM/CPU a container can use.
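For the Kubernetes case, scaling to zero can also be done from the command line with `kubectl scale` instead of editing the config. A rough sketch of a wrapper follows. The deployment name `blog` and the helper names are made up for illustration, and it assumes `kubectl` is installed and pointed at your cluster:

```python
import subprocess


def scale_command(deployment, replicas, namespace="default"):
    """Build the kubectl invocation that sets a deployment's replica count."""
    if replicas < 0:
        raise ValueError("replicas must be zero or greater")
    return [
        "kubectl", "scale", f"deployment/{deployment}",
        f"--replicas={replicas}",
        "--namespace", namespace,
    ]


def scale(deployment, replicas, namespace="default"):
    """Run the command; raises CalledProcessError if kubectl reports a failure."""
    subprocess.run(scale_command(deployment, replicas, namespace), check=True)
```

`scale("blog", 0)` parks the service without deleting its declaration, and `scale("blog", 1)` brings it back.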

21·1 month ago

Nope, pipx definitely can’t do that, but the idea that running `yourscript.py --help` will automatically trigger the downloading of dependencies and installing them somewhere isn’t really appealing. I’m sure I’m not the only person who’s got uv configured to install the virtualenv in the local `.venv` folder rather than buried into my home dir, so this would come with the added surprise that every time I invoke the script, I’d get a new set of dependencies installed wherever I happen to be.

I mean, it’s neat that you can do this, but as a user I wouldn’t appreciate the surprise behaviour. pipx isn’t perfect, but at least it lets you manage things like updates.

31·1 month ago

This looks like a reimplementation of pipx.

Syncthing on Android will be discontinued, and there’s a fork already, which as I said above, I use.

I guess it’s been a while then. Syncthing works perfectly for me, with the official latest version in Arch, the older version in Debian, the flatpak on Ubuntu, and the forked version on Android, syncing all my Joplin data all over the place.

I don’t much care for the file format though. The appeal of Git Journal is strong.

Joplin + Syncthing has been great for me. Sync across multiple devices with no third party in between. However the “sharing” in this context is limited to other installations of the entire db. To my knowledge, there’s no way to say “sync these notes with my wife, and these others with my phone only” etc.

2·2 months ago

Each Pi 4 has 8GB of RAM. With six devices, that’s 48GB to play with. More than enough for my needs.

5·2 months ago

Actually, as a web guy, I find the ARM architecture to be more than sufficient. Most of the stuff I build is memory heavy and CPU light, so the Pi is great for this stuff.

19·2 months ago

They’re fanless and low-power, which was the primary draw to going this route. I run a Kubernetes cluster on them, including a few personal websites (Nginx+Python+Django), PostgreSQL, Sonarr, Calibre, SSH (occasionally) and every once in a while, an OpenArena server :-)

57·2 months ago

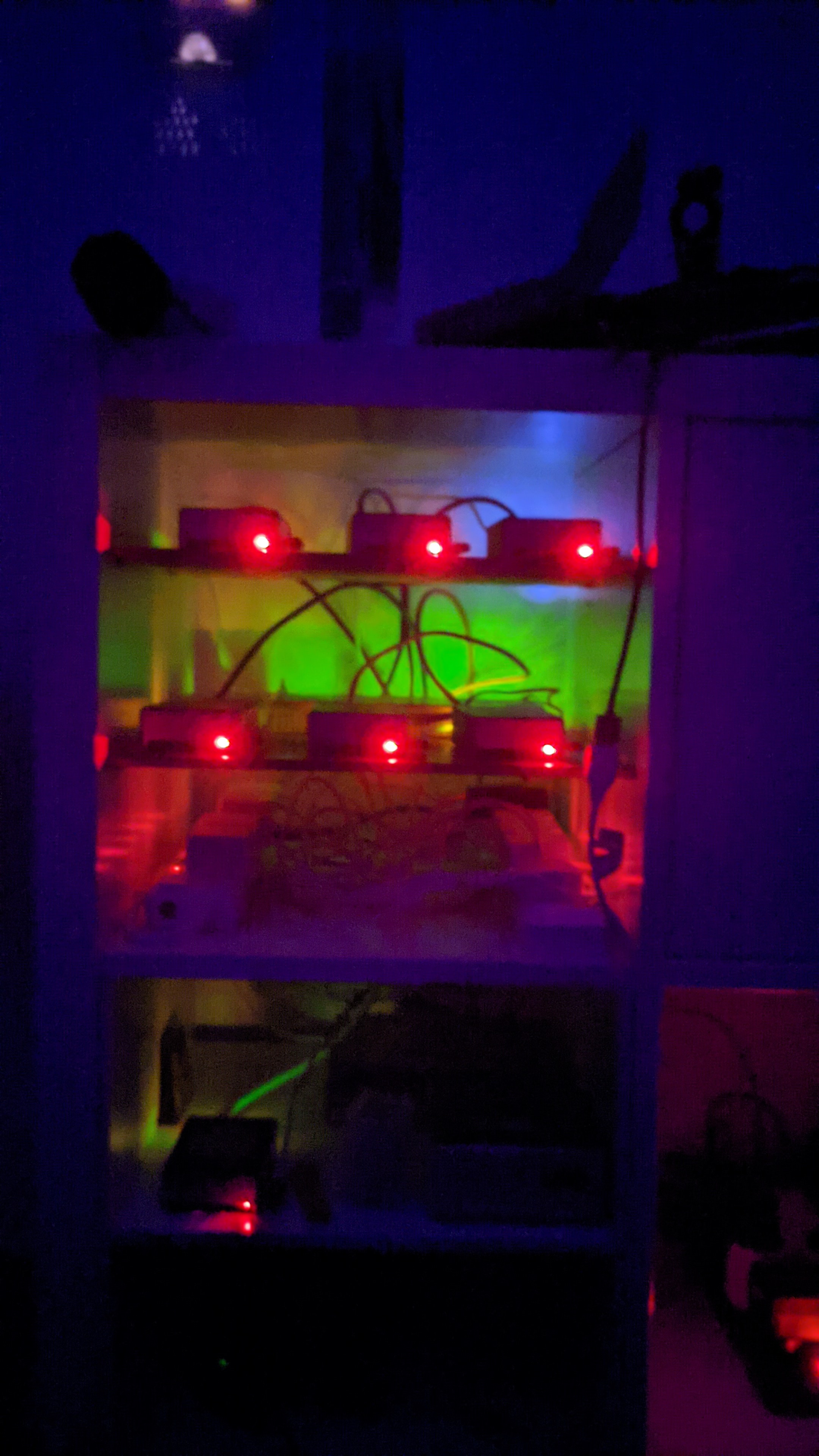

Seven Raspberry Pi 4s and one Pi Zero, mounted on some tile “shelves” inside some IKEA furniture.

1·2 months ago

Monolith has the same problem here. I think the best resolution might be some sort of browser-plugin based solution where you could say “archive this” and have it push the result somewhere.

I wonder if I could combine a dumb plugin with Monolith to do that… A weekend project perhaps.

1·2 months ago

Monolith can be particularly handy for this. I used it in a recent project to archive the outgoing links from my own site. Coincidentally, if anyone is interested in that, it’s called django-cool-urls.
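For a rough idea of what archiving a link with Monolith looks like from Python, here is a sketch that shells out to the `monolith` CLI and names each snapshot after a hash of its URL. This is not how django-cool-urls does it; the helper names and the hashing scheme are assumptions for illustration, and it needs `monolith` on your `PATH`:

```python
import hashlib
import subprocess
from pathlib import Path


def archive_path(url, archive_dir):
    """Derive a stable file name for a URL so repeat runs overwrite the same file."""
    digest = hashlib.sha256(url.encode("utf-8")).hexdigest()[:16]
    return Path(archive_dir) / f"{digest}.html"


def monolith_command(url, archive_dir):
    """Build the monolith invocation that saves a page as a single HTML file."""
    return ["monolith", url, "-o", str(archive_path(url, archive_dir))]


def archive(url, archive_dir="archive"):
    """Save one page and return the path of the snapshot."""
    Path(archive_dir).mkdir(parents=True, exist_ok=True)
    subprocess.run(monolith_command(url, archive_dir), check=True)
    return archive_path(url, archive_dir)
```

Looping `archive()` over the outgoing links of a site gives you a local copy of each one.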

12·2 months ago

You probably want to look into Health Checks. I believe you can tell Docker to “start service B when service A is healthy”, so you can define your health check with a script that depends on Tailscale functioning.
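A sketch of what such a health-check script could look like. It assumes the `tailscale` CLI is available inside the container and that `tailscale status --json` reports a `BackendState` of `"Running"` when the connection is up; verify both against your own setup. The function names are made up:

```python
import json
import subprocess


def is_healthy(status_json):
    """Return True only if the status output says the Tailscale backend is running."""
    try:
        status = json.loads(status_json)
    except json.JSONDecodeError:
        return False
    return isinstance(status, dict) and status.get("BackendState") == "Running"


def main():
    """Return 0 (healthy) or 1 (unhealthy), the exit codes a health check expects."""
    try:
        result = subprocess.run(
            ["tailscale", "status", "--json"],
            capture_output=True, text=True, timeout=5,
        )
    except (OSError, subprocess.TimeoutExpired):
        return 1  # CLI missing or hung: treat as unhealthy
    if result.returncode != 0:
        return 1
    return 0 if is_healthy(result.stdout) else 1
```

Run it as the health-check command with `raise SystemExit(main())` at the bottom of the file, then make service B depend on service A being healthy.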

4·3 months ago

So my first impression is that the requirement to copy-paste that elaborate SQL to get the schema is clever but not sufficiently intuitive. Rather than saying “Run this query and paste the output”, you say “Run this script in your database” and print out a bunch of text that is not a query at all but a one-liner Bash script that relies on the existence of `pbcopy`, something that (a) doesn’t exist on many default installs, (b) is a red flag for something that’s meant to be self-hosted (why am I talking to a pasteboard?), and (c) is totally unnecessary anyway.

Instead, you could just say: “Run this query and paste the result in this box” and print out the raw SQL only. Leave it up to the user to figure out how they want to run it.

Alternatively you can also do something like: “Run this on your machine and copy/paste the output”:

`$ curl 'https://app.chartdb.io/superquery.sql' | psql --username USERNAME --host HOSTNAME DBNAME`

In the case of the cloud service, it’s also not clear if the data is being stored on the server or client side in `LocalStorage`. I would think that the latter would be preferable.

Ooh! I have something for this.